Turn Research into Evidence You Can Check

Every chapter so far has applied the system to meetings, projects, decisions, and commitments. These are work logistics: the system helps you prepare, decide, follow through, and review. This chapter extends the same architecture to a different kind of work: research and learning. When you explore a question over days or weeks, the system can hold the evidence, track what it supports, and mark what is still uncertain.

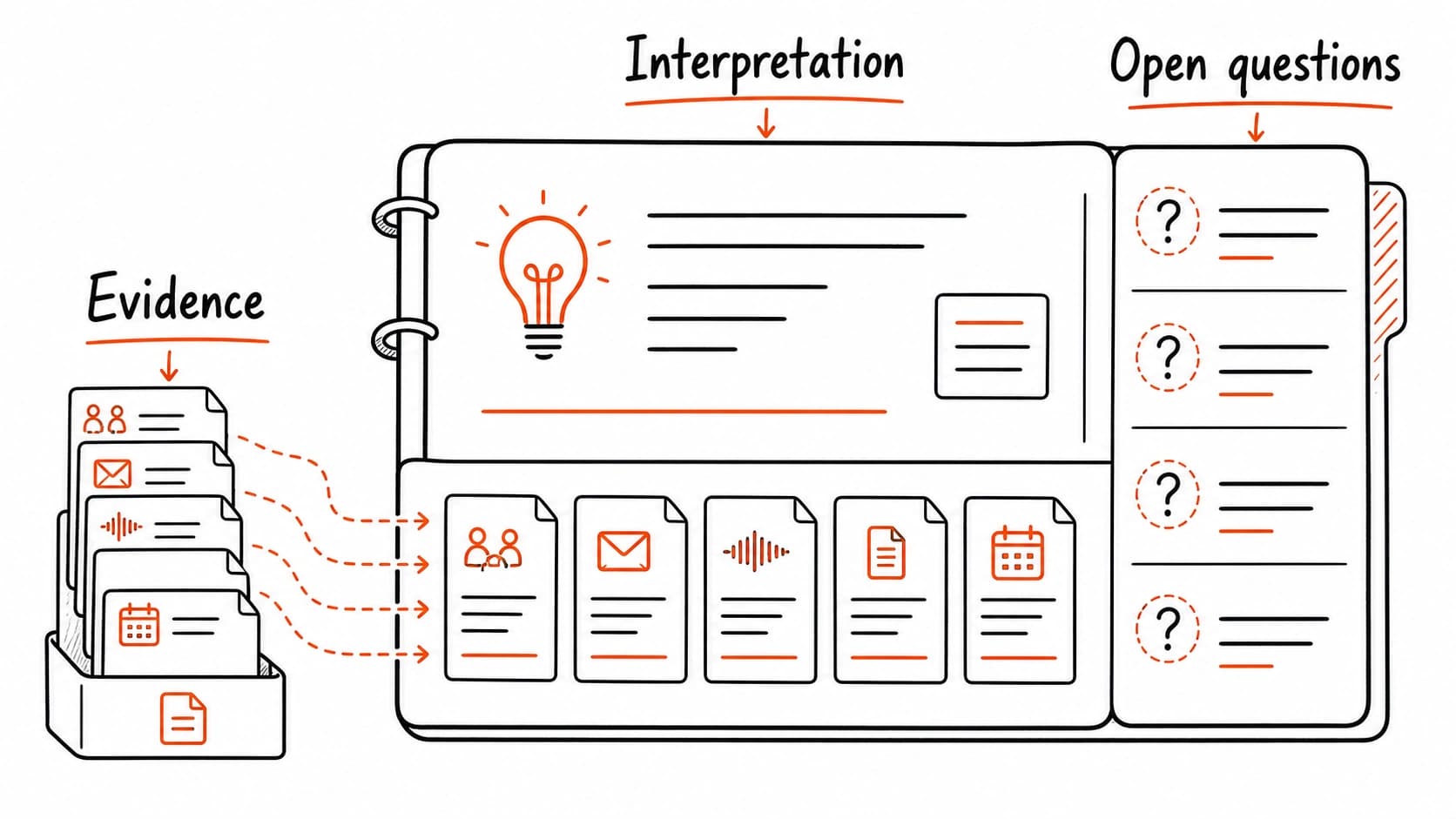

A research file is a collection of source cards organized around one question. Each card carries a claim from the source, the evidence behind it, the uncertainty you noticed, and a note on how useful the source might be. Together, the cards produce a picture: what do my sources say, where do they agree, where do they disagree, and what do I still not know?

Research uses the same architecture, with a different question

In the meeting chapters, the question was practical: what do I need before this call? In research, the question is epistemic: what do my sources support, and what is still inference? The architecture works the same way. Each claim points to a source. Each source has a date and an access label. The difference is what you do with the answer. A meeting prep packet leads to follow-through. A research file leads to understanding.

The fields stay familiar. Each card records where the source came from, when it appeared, and what it carries. Research adds three fields: the claim the source makes, the evidence it offers for that claim, and the uncertainty you notice.
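One way to picture a card is as a small record. The sketch below is illustrative only: the class name `SourceCard` and every field name are assumptions chosen to mirror the fields described above, not a format the system requires.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class SourceCard:
    """Hypothetical shape for one source card; all names are illustrative."""

    # Familiar fields carried over from earlier chapters.
    origin: str                # where the source came from
    appeared: Optional[date]   # when it appeared, if known
    access: str                # access label, e.g. "public" or "approval-only"

    # Fields that research adds.
    claim: str                 # the claim the source makes
    evidence: str              # the evidence it offers for that claim
    uncertainty: str           # the uncertainty you notice
    usefulness: str = ""       # optional note on how useful the source might be


card = SourceCard(
    origin="Industry report on onboarding practices",
    appeared=None,  # the date is left unset here because the text does not give one
    access="public",
    claim="Self-service onboarding reduces time-to-value.",
    evidence="Self-reported data.",
    uncertainty="No control group.",
)
print(card.claim)
```

Keeping the claim, the evidence, and the uncertainty in separate fields makes it harder to record a claim without also saying what backs it.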

Walkthrough: build a research file for the pilot scope question

The onboarding pilot has an open question: should the scope stay at two clients or expand to four? The decision ledger records this as a tentative entry. To make a better decision, you need evidence beyond what the meeting and email provided. Start a research file around the question: "what does the evidence say about pilot scope for client onboarding changes?"

Gather three sources. The first is an industry report on onboarding practices, which claims that self-service onboarding reduces time-to-value. The second is an internal memo from a previous pilot at the company, which describes what happened when a similar change was tested with five clients. The third is an email from the client who requested expansion to four, which explains their reasoning.

Create a source card for each one. The industry report carries a public claim with self-reported data and no control group. The internal memo carries private evidence from a real pilot, with names and results that are approval-only. The client email carries a preference, not evidence, and is also approval-only. Each source adds something different: external benchmarks, internal precedent, and stakeholder preference.
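As a rough sketch, the three cards can sit in a list and be filtered by access label or by whether they carry evidence at all. The `Card` type and its field names are hypothetical, chosen only for this illustration.

```python
from typing import NamedTuple


class Card(NamedTuple):
    """Hypothetical, pared-down card used only for this illustration."""

    source: str
    access: str        # "public" or "approval-only"
    contributes: str   # what this source adds to the picture
    is_evidence: bool  # False when the source carries a preference, not evidence


cards = [
    Card("industry report", "public", "external benchmark", True),
    Card("internal memo", "approval-only", "internal precedent", True),
    Card("client email", "approval-only", "stakeholder preference", False),
]

# Sources that need approval before their details are shared.
needs_approval = [c.source for c in cards if c.access == "approval-only"]

# Sources that carry evidence rather than a preference.
evidence_sources = [c.source for c in cards if c.is_evidence]

print(needs_approval)
print(evidence_sources)
```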

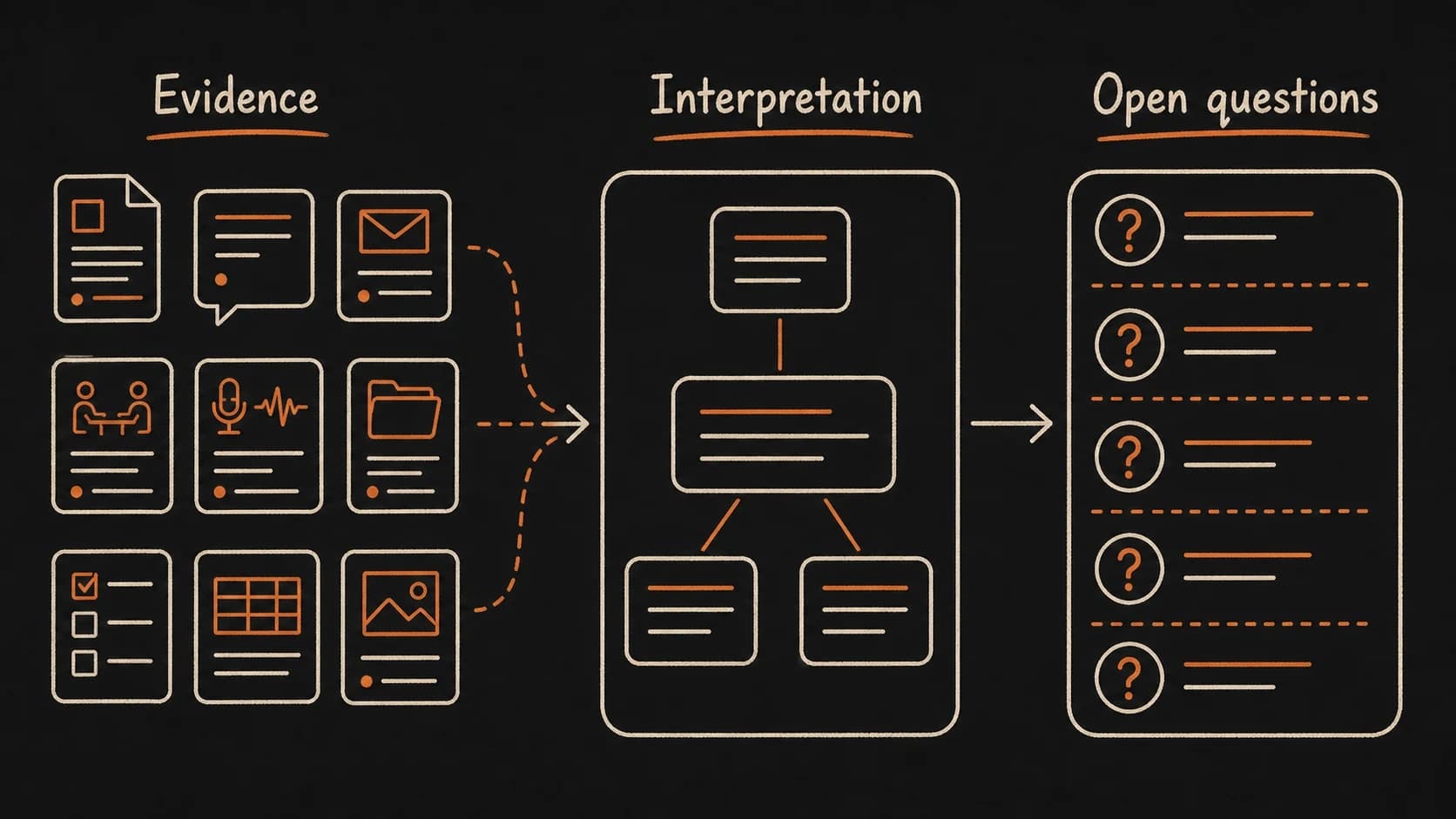

Now lay the three cards side by side and separate what the sources say (evidence) from what you conclude (interpretation). The evidence: one survey supports faster onboarding, one internal pilot found quality issues at scale, one client wants broader testing. The interpretation: expanding to four clients carries risk because the internal precedent, a five-client pilot, showed problems at a similar scale, even though the client and the industry report favor more testing. The open question: what specifically went wrong in the previous pilot, and does the new intake form address those problems?
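The three layers can be kept apart in the file itself. The following is a minimal sketch, assuming a hypothetical `ResearchFile` class with one list per layer. The names and the rendered layout are illustrative, not a prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchFile:
    """Hypothetical container that keeps the three layers in separate lists."""

    question: str
    evidence: list[str] = field(default_factory=list)        # what the sources say
    interpretation: list[str] = field(default_factory=list)  # what you conclude
    open_questions: list[str] = field(default_factory=list)  # what is still unknown

    def render(self) -> str:
        """Return the file as text, one labeled section per layer."""
        lines = [f"Question: {self.question}"]
        sections = (
            ("Evidence", self.evidence),
            ("Interpretation", self.interpretation),
            ("Open questions", self.open_questions),
        )
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)


pilot_file = ResearchFile(
    question="What does the evidence say about pilot scope for client onboarding changes?",
    evidence=[
        "One survey supports faster onboarding.",
        "One internal pilot found quality issues at scale.",
        "One client wants broader testing.",
    ],
    interpretation=["Expanding to four clients carries risk."],
    open_questions=[
        "What specifically went wrong in the previous pilot?",
        "Does the new intake form address those problems?",
    ],
)
text = pilot_file.render()
print(text)
```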

Separating evidence from interpretation is the core research skill

The most common research failure is treating interpretation as evidence. "The data suggests we should keep the pilot small" sounds like a finding. In practice, it is a conclusion drawn from the internal memo (evidence) combined with a judgment about risk (interpretation). The research file should make this distinction visible: the memo says what happened; you judge what it means for the current decision.

When the research file separates these layers, the reader can agree with your evidence and disagree with your interpretation. They can bring a new source that changes the interpretation without discarding the evidence. They can check whether the interpretation still holds after conditions change. When the layers are blended, challenging the conclusion requires re-reading every source from scratch.
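One way to make that checkability concrete is to have each interpretation record which pieces of evidence it rests on. The sketch below assumes hypothetical evidence ids (`E1` to `E3`) and a helper named `to_recheck`. Both are illustrative.

```python
# Evidence layer: what the sources say, keyed by a hypothetical id.
evidence = {
    "E1": "One survey supports faster onboarding.",
    "E2": "One internal pilot found quality issues at scale.",
    "E3": "One client wants broader testing.",
}

# Interpretation layer: each conclusion names the evidence it rests on.
interpretations = [
    {"text": "Expanding to four clients carries risk.", "rests_on": ["E2"]},
]


def to_recheck(changed_id, interpretations):
    """Return the interpretations that depend on one piece of evidence."""
    return [i["text"] for i in interpretations if changed_id in i["rests_on"]]


# If a new source revises what the internal pilot showed, only the
# interpretations resting on E2 need a second look.
print(to_recheck("E2", interpretations))
```

With this link in place, a reader who disputes the conclusion can go straight to the memo evidence rather than re-reading all three sources.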

Open questions are the most valuable part of the file

A finished research file does not need to resolve every question. The open questions at the end are often more useful than the conclusions. They tell you where the evidence stops and judgment begins. They name the next source that would change the picture. They prevent you from treating a partially supported interpretation as a settled finding.

For the pilot scope file, the open questions might include: What specifically failed in the previous five-client pilot? Does the new intake form address those failure points? Would the two clients in the current pilot generate enough feedback to judge the form fairly? Each question points toward a next source: the previous pilot's post-mortem, the design review for the new form, and the client's feedback expectations.
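The pairing of each question with its next source can be written down directly. This is a small sketch; the tuple layout and the helper name `without_next_source` are assumptions made for the example.

```python
# Each open question is paired with the next source that could answer it.
open_questions = [
    ("What specifically failed in the previous five-client pilot?",
     "the previous pilot's post-mortem"),
    ("Does the new intake form address those failure points?",
     "the design review for the new form"),
    ("Would the two clients in the current pilot generate enough feedback "
     "to judge the form fairly?",
     "the client's feedback expectations"),
]


def without_next_source(questions):
    """Return the questions that do not yet name a next source."""
    return [question for question, next_source in questions if not next_source]


next_sources = [next_source for _, next_source in open_questions]
print(next_sources)
print(without_next_source(open_questions))
```

A question that shows up in `without_next_source` is a prompt to decide where to look next before treating the file as finished for now.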

Build a research file for one open question

Claude reads your sources, builds source cards, and separates what the evidence says from what you conclude.